Quality

The Swiss Army Knife of Manufacturing

June 22, 2020

For decades, building a data center worked a lot like stacking pizzas in a pizzeria… you could easily slide any server into a standard slot, much the same way you could slide any pizza into a standard oven or box.

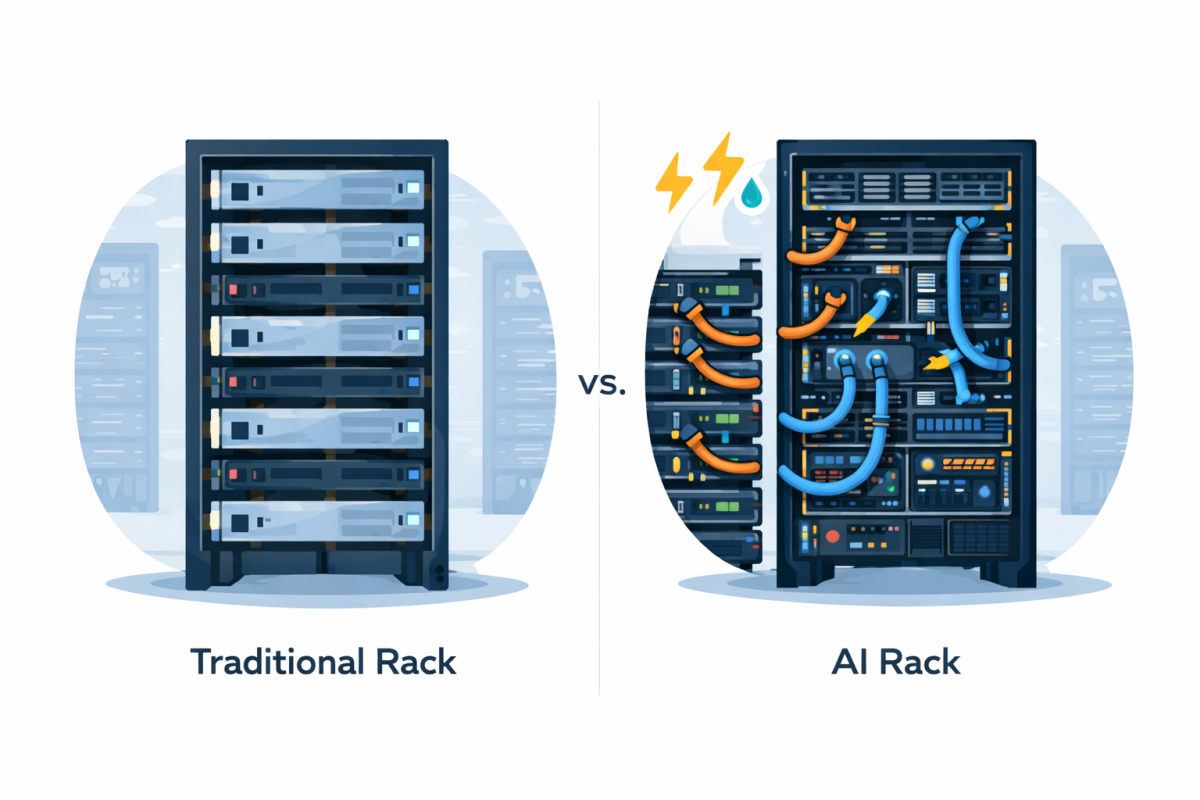

But as AI models exploded in size, server rooms began to look less like “Mystic Pizza”, and more like “The Manhattan Project”. Almost overnight, hardware morphed into high-performance beasts that hummed with enough electricity to power an entire neighborhood.

With the sudden need to accommodate AI hardware, engineers found themselves in a desperate “space race.” But instead of going to the moon or launching Sputnik, engineers were cramming massive GPUs and thick liquid-cooling hoses into narrow metal cabinets that were never designed to hold them.

And even if they were somehow crammed in, these AI machines could generate heat intense enough to warp traditional metal frames. It soon became obvious that the entire infrastructure needed to be rethought.

A leader in AI with its Llama models, Meta (along with everyone else in the industry) realized that the old shelves wouldn’t cut it for these industrial-scale engines, necessitating a leap to a wider, reinforced chassis that could handle the raw physical demands of AI-era hardware.

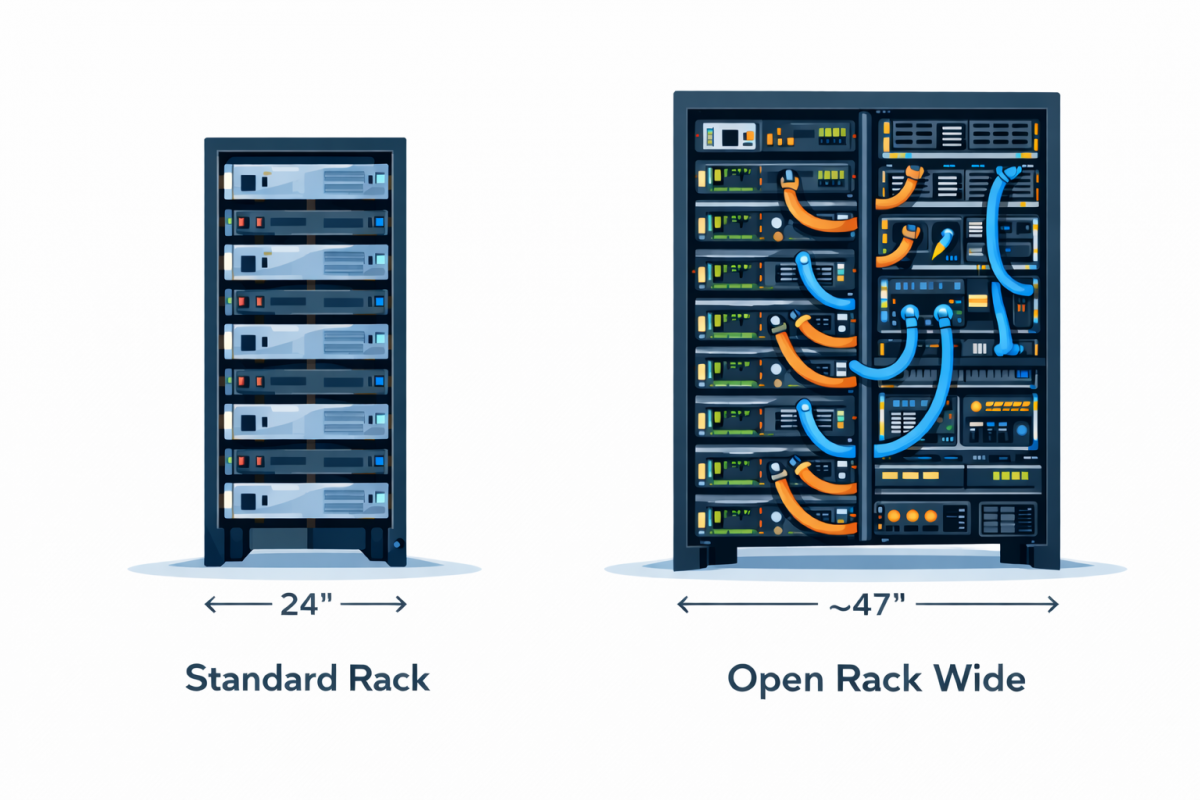

Meta realized that to keep AI growing, it had to stop treating the rack as an afterthought and start treating it as a key bottleneck in its business. It did this by designing and building the Open Rack Wide (ORW) as a heavy-duty chassis that could breathe, move heat, and survive the raw mechanical stress (both heat and load) of the most powerful computers ever built.

Meta’s ORW design completely rethought the physical “shelves” that AI computers sit on, allowing for vastly more power-hungry, cooling-intensive systems.

So instead of the old, narrow racks built for web servers, these new wider racks (which have specs more like an engine chassis) make room for lots of AI chips, along with the heavy-duty cooling they need so they don’t overheat.

The ORW also rearranges how power is delivered, with one cabinet dedicated to feeding electricity and another to hosting the compute machines. This configuration makes it easier to scale up and to use data center space more efficiently.

To handle the massive energy intake these machines require, the internal power architecture moved in the third iteration (ORv3) from 12 V to a higher-voltage 48 V system, which slashes energy waste by lowering the current needed to deliver the same power (note: the ORW is effectively a double-wide iteration of the ORv3).
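To see why the move from 12 V to 48 V matters, here is a minimal sketch of the underlying arithmetic: for the same delivered power, current falls as voltage rises (I = P / V), and heat lost in a conductor scales with the square of the current (P_loss = I² × R). The rack power and busbar resistance below are hypothetical round numbers picked for illustration, not figures from the Open Rack specifications.

```python
def bus_current_amps(power_watts: float, bus_volts: float) -> float:
    """Current needed to deliver power_watts at bus_volts (I = P / V)."""
    return power_watts / bus_volts


def resistive_loss_watts(power_watts: float, bus_volts: float,
                         resistance_ohms: float) -> float:
    """Heat dissipated in the conductor (P_loss = I^2 * R)."""
    current = bus_current_amps(power_watts, bus_volts)
    return current ** 2 * resistance_ohms


# Hypothetical values for illustration only; not taken from any rack spec.
RACK_POWER_W = 12_000.0
BUSBAR_RESISTANCE_OHMS = 0.0005

for volts in (12.0, 48.0):
    amps = bus_current_amps(RACK_POWER_W, volts)
    loss = resistive_loss_watts(RACK_POWER_W, volts, BUSBAR_RESISTANCE_OHMS)
    print(f"{volts:.0f} V bus: {amps:.0f} A, {loss:.2f} W lost in the busbar")
```

Under these assumed numbers, quadrupling the voltage cuts the current to a quarter and the resistive loss to one sixteenth, regardless of the specific power or resistance chosen.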

This wider footprint isn’t just about size; it’s about creating a “thermal highway” for the complex liquid-cooling plumbing that keeps silicon from melting. By separating the power delivery into its own dedicated cabinet alongside the compute machines, the design prevents electrical heat from compounding the temperature of the processors.

This layout also solves signal-reach problems by keeping chips close enough to communicate with each other over high-speed copper cables before the signal loses its strength.

Structurally, the rack has evolved into a modular, bolt-together chassis capable of surviving the constant mechanical vibration and thermal expansion of a high-output environment. It also acts as a standardized docking station, allowing operators to swap in the latest GPUs without having to re-plumb anything.

The result is a rugged, future-proof foundation that treats the rack as a high-performance engine block rather than a simple shelf. This all means the ORW has bridged the gap between “impossible” AI demands and the cold, hard reality of data center physics.

The most recent iteration of this rack, ORW, is physically imposing: it measures approximately 47 inches wide (roughly twice the standard 24-inch width) and is engineered to support equipment weighing as much as 3,080 pounds (see section 6.2)!

To feed this dense machinery, the design uses high-voltage direct-current inputs and specialized liquid-cooled busbars that carry electricity directly to the power-hungry hardware.

This robust structure ensures that the rack can safely house the heavy cooling manifolds and massive power shelves required for frontier AI training.

While some companies build closed, proprietary systems that lock customers into a single vendor, Meta’s initiative promotes industry-wide collaboration through the Open Compute Project.

In this model, the core server and rack designs are published openly, so anyone can inspect, implement, or improve them rather than being forced to buy tightly controlled, black-box hardware. This shifts power away from a few large vendors and toward a broader ecosystem of manufacturers, cloud providers, and end users who all benefit from shared, standardized designs.

This open approach also allows manufacturers such as AMD to build interoperable systems, like the Helios AI server, which fits seamlessly into the Open Rack Wide standard. So instead of redesigning everything from scratch, hardware vendors can plug their innovations into a known mechanical and electrical framework, cutting down integration time and reducing risk for customers.

For buyers, this standardization means they can mix and match CPUs, GPUs, and other components from different suppliers without rebuilding their entire data center layout.

As data centers evolve from “pizza ovens” into high-performance engine blocks, Prismier is the manufacturing partner that can help turn those Open Rack Wide blueprints into an actual heavy-duty reality.

Prismier specializes in the high-precision fabrication required for these builds: from the beefy reinforced frames that hold 3,000+ pounds, to the intricate bits that handle cabling and liquid cooling.

With AI “goliath” farms popping up like mushrooms, the only thing we know for sure is that the specs will change again, and soon… which means you need a partner who can pivot as fast as the industry does.

By sharing these designs, the industry avoids “vendor lock-in,” ensuring that different companies can innovate on the same physical foundation. This means that a data center that starts with one vendor’s hardware can gradually introduce another vendor into the same racks while using the same power and networking layout.

Over time, this flexibility encourages price competition, faster iteration, and more experimentation, because no single company controls the entire stack or the upgrade path.

Prismier is built to move at your speed, ensuring that whatever the next “big thing” is, you’ve got the manufacturing backbone to support it.